The House Of Data Series: Data Privacy

This paper focuses on data privacy as an operational discipline — classification, access control, retention, consent, and the governance structures that make privacy enforceable rather than aspirational. It does not cover regulatory compliance frameworks or security controls in depth — those are addressed in the Compliance and Data Security whitepapers.


House of Data Series

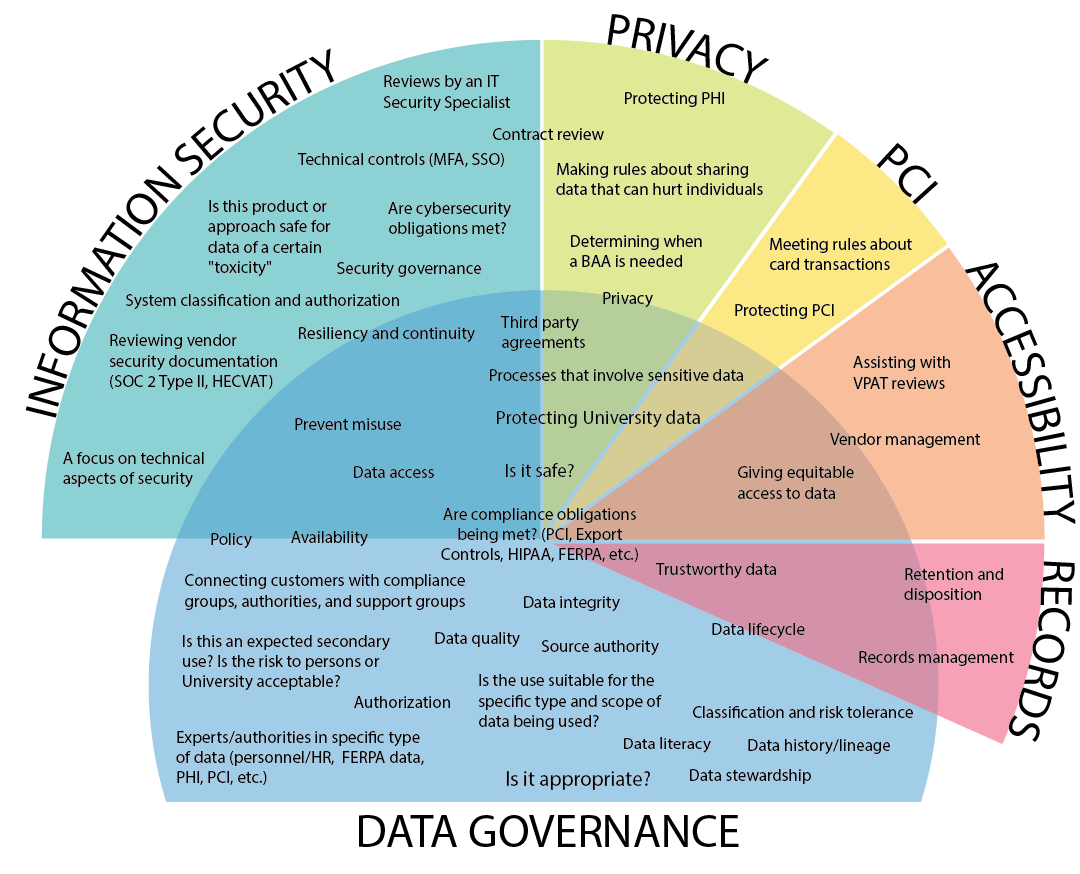

Every strong data program is built like a house. Data Architecture forms the foundation — the platforms, pipelines, and operating model that everything else depends on. Seven domain pillars rise from that foundation, each one essential to a complete data program: Data Quality, Privacy, Data Security, DataOps, Compliance, Data Enablement, and Data Consumption. Data Literacy runs across all seven as a connecting beam, ensuring people at every level can read, interpret, and act on data. At the top, People & Leadership sets the direction, accountability, and culture that holds the whole structure together.

This series of whitepapers covers each component of the House of Data in depth. Each paper was written by Jim Barker (Principal, Data Strategy), with support from a practitioner with direct experience in that domain. Together, they form a practical guide to building data programs that earn — and keep — trust.

This paper covers Data Privacy — the principles, classification frameworks, regulations, and operational practices that organizations must apply to handle personal data responsibly and maintain trust.

Data privacy

Privacy is the second pillar of the House of Data. Since GDPR came into force in 2018, data privacy has moved from a legal checkbox to a core operational discipline — and the rise of AI has added a new dimension: data sensitivity. Organizations now need to know not just what personal data they hold, but where it flows, how it's classified, and whether it's being used in AI systems in ways that create compliance or reputational risk.

Data privacy has grown in importance since GDPR took effect across the EU in 2018, and again with the rapid growth of AI in recent years. The intersection of AI and data privacy is best treated as its own category: sensitivity. This paper reviews critical vocabulary, revisits the tactical activities of privacy work, and explains why data privacy is vital to AI, including the role of sensitivity.

Core definitions

Data privacy should be defined as the rights and expectations individuals have over how their personal information is collected, used, shared, stored, and protected. It ensures that people maintain control over their personal data and that organizations handle it in ways that are lawful, transparent, and respectful of individual consent. This includes acting on requests of the data owner on a timely basis in accordance with appropriate laws and regulations. — University of Chicago

Data sensitivity in the context of AI relates to the necessity of safeguarding certain information against unauthorized access, use, or disclosure due to the potential harm or adverse consequences its exposure could cause.

Artificial Intelligence (AI) should be defined as computational systems capable of performing tasks that typically require human intelligence, such as learning, reasoning, pattern recognition, language understanding, and decision-making, using data to improve their performance over time. Because AI systems rely heavily on data, they must be designed and operated in ways that protect sensitive, personal, or confidential information. — TechTarget

Sensitive data is any information that must be protected due to its confidential, personal, or financial nature and the harm that could come if it is disclosed, misused, or accessed without authorization or in violation of legal or compliance requirements governing its handling. — University of Virginia

Privacy characteristics

Data characteristics identify why data is sensitive by its nature: is it personally identifiable, is it tied to financial or commercial descriptors, is it related to health privacy, or is it a trade secret? These typically break down into: (1) PII, Personally Identifiable Information; (2) PCI, Payment Card Industry data; (3) PHI, Protected Health Information; and (4) trade secret data.

The set of data characteristics continues to grow as legislation moves forward, and the areas listed below are under consideration by legislatures worldwide. Data privacy characteristics are the fundamental attributes or principles that define how personal or sensitive data must be collected, used, protected, and managed to ensure the privacy rights of individuals and compliance with legal and ethical standards. This view expands on the familiar PII, PCI, and PHI categories and rests on the core data privacy characteristics that follow.

Core data privacy characteristics

- Purpose limitation — Data must only be used for the specific, legitimate purpose for which it was collected.

- Data minimization — Only the minimum necessary personal data should be collected, processed, or retained.

- Consent and choice — Individuals must have the ability to choose how their data is used and give informed consent when required.

- Transparency — Organizations must clearly communicate how data is collected, used, shared, and stored.

- Accuracy — Personal data must be kept correct, complete, and up to date.

- Confidentiality — Data must be protected from unauthorized access or exposure.

- Integrity — Data should not be altered or destroyed improperly; it must remain trustworthy and accurate.

- Accountability — Organizations must demonstrate compliance with privacy principles through governance, controls, and documentation.

- Individual participation and rights — People must be able to access, correct, delete, or object to the processing of their personal data.

- Storage limitation — Data cannot be retained longer than necessary for its intended purpose.

- Security safeguards — Technical and administrative controls must protect data from breach, misuse, or loss.

- Fairness and non-discrimination — Data must not be used in ways that cause unjust or discriminatory outcomes.

Expanded privacy vocabulary

Data privacy classification is the process of categorizing data based on its sensitivity, risk, and the relevant privacy regulations such as GDPR. This helps organizations determine the appropriate level of security and internal controls needed to protect the data from unauthorized access, use, or disclosure, ensuring it is handled efficiently and in compliance with legal requirements. Classification decisions should be grounded in the applicable legal provisions so that they protect individuals, protect the organization, and support regulatory compliance.

Most firms spend time and legal resources to determine their data classifications. A commonly used set of classifications is described in the appendix under Privacy classification structure.

General Data Protection Regulation (GDPR)

The General Data Protection Regulation (GDPR) is a comprehensive data protection and privacy law adopted by the European Union (EU) in 2016 and applicable since May 2018. It governs how organizations collect, use, store, share, and protect personal data of individuals located in the EU or EEA. It gives individuals strong rights over their personal information and places strict obligations on organizations to handle that data responsibly, transparently, and securely.

Using GDPR as a focal point is appropriate because many privacy regulations worldwide drew on GDPR as a model when they were written.

A brief history

Data privacy is not new. Its roots go back to Samuel Warren and Louis Brandeis's 1890 Harvard Law Review article "The Right to Privacy," which argues for the individual's "right to be let alone." While this is a common quiz show question, those in the data field should remember it because it is core to discussions of data privacy, and it is as relevant today as it was in 1890. The rollout of GDPR changed the game, and many jurisdictions have released their own rules since.

Core rights under GDPR

At the core, these areas of consensus are worthy of focus:

GDPR main sections

GDPR has 99 articles. The articles most important to privacy professionals are called out in the appendix, alongside the full list. GDPR set out to cover the whole privacy topic, describing the rights of individuals and how concerns are tracked and addressed. The remaining articles are narrower in scope; they are no less important, just less time consuming.

ROPA: Record of Processing Activities

ROPA stands for Record of Processing Activities. It is a mandatory documentation requirement under Article 30 of the GDPR — a detailed record that organizations must maintain describing what personal data they process, why they process it, how they process it, who they share it with, and how long they keep it.

Purpose: A ROPA helps demonstrate GDPR compliance to regulators and provides transparency into how personal data is used, stored, shared, and protected.

Typical contents include:

- Controller and processor details — who is responsible for the processing.

- Purpose of processing — why the data is being processed.

- Categories of data subjects — e.g., customers, employees, partners.

- Categories of personal data — e.g., contact info, payment details.

- Categories of recipients — who receives the data, including third parties.

- Transfers to third countries — and safeguards in place.

- Retention periods — how long data is kept.

- Security measures — a high-level description of protections in place.
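The typical contents listed above map naturally onto a structured record. The following is a minimal sketch, not a prescribed ROPA format: the `RopaEntry` class, its field names, and the `gaps` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RopaEntry:
    """One processing activity; fields mirror the typical contents above."""
    controller: str
    purpose: str
    data_subject_categories: List[str]
    personal_data_categories: List[str]
    recipient_categories: List[str]
    retention_period: str
    security_measures: str
    processor: Optional[str] = None
    third_country_transfers: List[str] = field(default_factory=list)
    transfer_safeguards: Optional[str] = None

    def gaps(self) -> List[str]:
        """Return the names of required fields that were left empty."""
        required = [
            "controller", "purpose", "data_subject_categories",
            "personal_data_categories", "recipient_categories",
            "retention_period", "security_measures",
        ]
        missing = [name for name in required if not getattr(self, name)]
        # A recorded transfer should come with the safeguards that cover it.
        if self.third_country_transfers and not self.transfer_safeguards:
            missing.append("transfer_safeguards")
        return missing


entry = RopaEntry(
    controller="Example Corp (hypothetical)",
    purpose="Order fulfilment",
    data_subject_categories=["customers"],
    personal_data_categories=["contact info", "payment details"],
    recipient_categories=["payment processor"],
    retention_period="",  # deliberately left blank to show the check
    security_measures="Encryption at rest, role-based access",
    third_country_transfers=["US"],
)
print(entry.gaps())
```

A completeness check like `gaps` is one way to keep the record audit-ready; what counts as required in practice is a decision for legal and the privacy office.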

Who needs it: Generally, any organization with 250 or more employees must keep a ROPA. Under Article 30(5), smaller organizations also need one if their processing is likely to pose a risk to individuals, is not occasional, or includes special categories of data or data on criminal convictions. Biometric data falls within the special categories.

The privacy paradox: conflicts in practice

Privacy obligations can conflict. One example is a person asking for their data to be deleted while another statute requires that same data to be retained for regulatory purposes. The open question is what to do when this kind of conflict arises. The paradox is the right to have data deleted versus, for example, the courts' need for data to be retained for seven years.

The short answer: talk to your lawyers. As a data professional, don't try to be the expert in legal matters. As Obendiek puts it in Data Governance: Value Orders and Jurisdictional Conflicts, there are cross-jurisdictional requirements that need to be addressed. Most data professionals are not equipped to answer these legal questions, so get legal help. Your organization's legal resources exist to handle these situations, to help you, and to protect your firm. Let them do their job.

Access

Just as your legal team is a valuable resource for privacy matters, the InfoSec (Information Security) team is a resource for advancing privacy needs. Many firms place high importance on securing their most privacy-sensitive data, so tools that can restrict access to sensitive data should be considered; the more sensitive the data, the more the available access restrictions matter. InfoSec teams should use the characteristics (PII, PCI, PHI) and classifications (Public, Private, Internal, Sensitive, Restricted) as part of the data access process. Doing so is not only appropriate but wise, and it can save firms money in fines and penalties. Sensible limits set business teams up for success: the data stays available, and the firm avoids situations where all access is removed because bad actors misused privacy-sensitive data.
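One way to picture characteristics and classifications working together in an access decision is sketched below. It assumes an ordered set of levels borrowed from the appendix and a hypothetical rule: a user needs both sufficient clearance and explicit approval for each characteristic on the column. The `User`, `Column`, and `can_access` names are illustrative, not part of any product.

```python
from dataclasses import dataclass
from enum import IntEnum


class Classification(IntEnum):
    # Ordered so that a higher value means more sensitive (assumed ordering).
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Column:
    name: str
    classification: Classification
    characteristics: frozenset = frozenset()  # e.g. {"PII"}, {"PCI"}, {"PHI"}


@dataclass(frozen=True)
class User:
    name: str
    clearance: Classification
    approved_characteristics: frozenset = frozenset()


def can_access(user: User, column: Column) -> bool:
    """Allow access only when clearance covers the classification and every
    characteristic on the column has been approved for this user."""
    if user.clearance < column.classification:
        return False
    return column.characteristics <= user.approved_characteristics


analyst = User("analyst", Classification.CONFIDENTIAL, frozenset({"PII"}))
email = Column("customer.email", Classification.CONFIDENTIAL, frozenset({"PII"}))
card = Column("payment.card_number", Classification.RESTRICTED, frozenset({"PCI"}))

print(can_access(analyst, email))  # True: clearance and PII approval both match
print(can_access(analyst, card))   # False: clearance is below RESTRICTED
```

Keeping the two checks separate means a user with high clearance still cannot read payment data without PCI approval, which is the kind of limit that keeps data available without opening it to everyone.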

Accountability

The CISO (Chief Information Security Officer), the CPO (Chief Privacy Officer), and the GRC (Governance, Risk, and Compliance) team are accountable for how data is used under privacy regulations. Let them help you, and provide them with the reporting and transparency they need to manage risk.

Transparency

It is vital to be transparent when bad things happen. Key areas requiring transparency include:

- Privacy-related requests — Understand who, what, and when privacy requests were submitted.

- Privacy violations — What happened, when, and who was involved, and what are our next steps.

- Access requirements for privacy-sensitive objects — Who is requesting privacy-sensitive data, when was it requested, what is the purpose of using that data, and how do we verify that the privacy-sensitive objects are being used appropriately.

- Violations and fines — What violations have occurred, what are the specifics (what, who, when, why), and what are we doing for corrective action.

Data privacy activities

As previously stated, customers, vendors, and employees have a set of rights. Each of these rights can generate requests that need to be processed. These requests can include (this list is not exhaustive):

- Please delete my tax information.

- Please delete all my data.

- Please don't profile my personal information.

- Please don't sell my personal information.

- Please don't process my personal data.

- Please delete my personal data and forget that I was ever your customer.

- Please don't share my personal data.

- Please fix my data — it is incorrect.

In these situations the request should be logged, tracked, addressed, and communicated back to the requestor. These are what could be called privacy actions.
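The log, track, address, and communicate cycle can be sketched as a small state machine. This is an illustration under assumptions: the `PrivacyAction` class, the status names, and the rule that steps cannot be skipped are hypothetical, and a real system would persist the history rather than hold it in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    LOGGED = "logged"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"
    COMMUNICATED = "communicated"


# Allowed forward transitions; COMMUNICATED is the final state.
NEXT = {
    Status.LOGGED: Status.IN_PROGRESS,
    Status.IN_PROGRESS: Status.COMPLETED,
    Status.COMPLETED: Status.COMMUNICATED,
}


@dataclass
class PrivacyAction:
    request_id: str
    requestor: str
    request_type: str  # e.g. "erasure", "rectification", "do_not_sell"
    status: Status = Status.LOGGED
    history: list = field(default_factory=list)

    def __post_init__(self):
        self._record(self.status)

    def _record(self, status):
        # Timestamp every status so aging can be reported later.
        self.history.append((status, datetime.now(timezone.utc)))

    def advance(self):
        """Move to the next step; a closed request cannot advance again."""
        if self.status not in NEXT:
            raise ValueError(f"{self.request_id} is already closed")
        self.status = NEXT[self.status]
        self._record(self.status)
        return self.status


action = PrivacyAction("REQ-001", "customer@example.com", "erasure")
for _ in range(3):
    action.advance()
print([status.value for status, _ in action.history])
```

The timestamped history is what makes the request auditable: it answers who asked for what, when each step happened, and when the requestor was told.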

Privacy action aging

Privacy teams need a set of aging reports that show which privacy actions have been requested, which have been completed, and which have been communicated back to the requestor. Additionally, reports should be generated and shared with leadership, including:

- Number of requests

- Time to process requests

- Number of required activities that are outstanding

- Number of requested activities that took longer than service-level expectations

- Number of requested activities by request type

- Number of completed tasks by customer
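The metrics above can be computed from a simple request log. The sketch below uses made-up sample data and a hypothetical 30-day service-level expectation (`SLA_DAYS`); the real target should come from your legal and privacy teams.

```python
from collections import Counter
from datetime import date

SLA_DAYS = 30  # hypothetical service-level expectation

# Sample rows: (request_type, customer, received, completed or None if open)
requests = [
    ("erasure", "cust-1", date(2024, 1, 2), date(2024, 1, 20)),
    ("rectification", "cust-2", date(2024, 1, 5), date(2024, 2, 14)),
    ("erasure", "cust-1", date(2024, 2, 1), None),
    ("do_not_sell", "cust-3", date(2024, 2, 10), date(2024, 2, 12)),
]
as_of = date(2024, 3, 15)  # report date used to age open requests


def age_days(received, completed):
    """Days from receipt to completion, or to the report date if still open."""
    return ((completed or as_of) - received).days


completed = [r for r in requests if r[3] is not None]
report = {
    "total_requests": len(requests),
    "avg_days_to_process": sum(age_days(r[2], r[3]) for r in completed) / len(completed),
    "outstanding": sum(1 for r in requests if r[3] is None),
    "over_sla": sum(1 for r in requests if age_days(r[2], r[3]) > SLA_DAYS),
    "by_type": dict(Counter(r[0] for r in requests)),
    "completed_by_customer": dict(Counter(r[1] for r in completed)),
}
for metric, value in report.items():
    print(metric, value)
```

Open requests are aged against the report date, so a request that is still outstanding and already past the service level shows up in `over_sla` before it is closed.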

Lineage benefits to privacy

Lineage supports many of the privacy actions. Privacy is one of the most overlooked use cases for lineage, yet it stands to gain some of the most important benefits. By leveraging a lineage graph when a privacy action is requested, you can show the provenance of the data and the impact of removing the data. You may still need to make the change, but lineage can provide details as to the impact of the requested change and help expedite the change itself.

If we change the data in a Customer table, lineage shows what reports and processes will be impacted. Likewise, if data appears in a report, lineage shows where it came from — which will require the necessary changes to meet the privacy action request.
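Both directions described above, impact and provenance, are graph traversals. The sketch below is a generic breadth-first walk over a hypothetical edge list; the asset names are invented for illustration and this is not how any particular lineage product stores or queries its graph.

```python
from collections import deque

# Hypothetical lineage edges: upstream asset -> assets that read from it.
EDGES = {
    "crm.customer": ["warehouse.dim_customer"],
    "warehouse.dim_customer": ["reports.top_customers", "ml.churn_training_set"],
    "reports.top_customers": ["dashboards.exec_summary"],
}


def reachable(start, edges):
    """Breadth-first walk returning every asset reachable from start."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def invert(edges):
    """Flip edge direction so the same walk can trace provenance."""
    reverse = {}
    for src, targets in edges.items():
        for tgt in targets:
            reverse.setdefault(tgt, []).append(src)
    return reverse


# Impact: what is affected downstream if the Customer table changes?
impact = reachable("crm.customer", EDGES)
# Provenance: where did the data in a report or dashboard come from?
provenance = reachable("dashboards.exec_summary", invert(EDGES))

print(sorted(impact))
print(sorted(provenance))
```

For a deletion request, the impact set is the list of places to check before responding; the provenance set is the list of sources that must change for a fix in a report to stick.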


AI trust

One of the pillars of AI Trust is data sensitivity, which is closely related to data privacy. The idea behind data sensitivity is a core question: "Is our data being used appropriately by our AI application?" or "Is any data being used by AI that shouldn't be?" Firms need to be able to determine the sharing profile or classification of their data, and only allow data in the appropriate classes to be used for AI efforts. Governing the sensitivity of data used by AI and classifying data from a privacy perspective are the same challenge.

How do we classify our data to only use — or allow access to — data deemed "not sensitive" for the transform and execution of AI processes? The key point is to classify your data and monitor its use so that no data is ever used by AI that isn't authorized. In the area of AI Trust, data sensitivity and data privacy need to work together to control the use of sensitive data and ensure AI trust.
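The classify-and-monitor idea can be sketched as a gate in front of any AI workload. Everything here is an assumption for illustration: the `ALLOWED_FOR_AI` policy, the catalog contents, and the choice to block unclassified columns by default.

```python
ALLOWED_FOR_AI = {"public", "internal"}  # hypothetical policy


def gate_for_ai(columns, catalog):
    """Split requested columns into allowed and blocked lists.

    Columns missing from the catalog are blocked: unclassified data is
    treated as sensitive until someone classifies it.
    """
    allowed, blocked = [], []
    for col in columns:
        label = catalog.get(col)
        (allowed if label in ALLOWED_FOR_AI else blocked).append(col)
    return allowed, blocked


# Hypothetical catalog mapping columns to their classification.
catalog = {
    "orders.order_date": "internal",
    "orders.region": "public",
    "customers.email": "restricted",
}
requested = [
    "orders.order_date", "orders.region", "customers.email", "customers.notes",
]

allowed, blocked = gate_for_ai(requested, catalog)
# Record every blocked attempt so inappropriate use can be monitored.
audit_log = [{"column": col, "decision": "blocked"} for col in blocked]

print(allowed)
print(blocked)
```

Defaulting to "blocked" for anything unclassified is a deliberate design choice in this sketch: it turns gaps in classification into visible work items instead of silent exposure.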

AI and data privacy

The intersection of AI and data privacy does not get nearly enough coverage. Most firms have a solid grasp on data privacy, or at least awareness of it. Many firms have substantial interest in progressing AI projects. The challenge is that too many firms don't look at AI and privacy together. They should.

Consider this scenario: a business user runs an analytical report in Power BI, Looker, or Tableau and generates a file. Then they load the file into an AI tool to create a report. They get useful results, but they may have just exposed your list of top customers outside the organization, where your biggest competitors could exploit it. This applies to any AI tool.

Firms need to be careful about how data is used with AI. They need to identify what data can be shared (classification) and what data is sensitive to use (characteristics). End users need to be educated on what data they can put into a generative AI solution and on the risks of using data inside AI. This doesn't mean avoiding AI. It means training staff and collecting the details needed to identify inappropriate use of data in AI applications, so that the use of AI can be tracked and kept in line with privacy requirements.

Bigeye's role in privacy

Bigeye's role in the world of privacy continues to shift as the rules and regulations around the world change. The main pieces of functionality that customers leverage for privacy are Sensitive Data Scanning, Profiling, and Lineage.

Sensitive Data Scanning provides the capability to see any columns of data that contain sensitive data across a variety of classifiers. Data privacy professionals run an SDS scan on a set of data, review what data is deemed sensitive, add records to the privacy risk registry, and take action. This work tends to happen as part of a monthly audit.
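To make the scan, review, and register workflow concrete, here is a generic pattern-matching sketch. It is not Bigeye's implementation or API: the two regex classifiers, the 80% match threshold, and the register format are all assumptions for illustration.

```python
import re

# Hypothetical classifiers; real scanners use many more, with validation.
CLASSIFIERS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
}
THRESHOLD = 0.8  # assumed: flag a column when 80% of sampled values match


def scan(table):
    """Return {column: classifier} for columns whose samples mostly match."""
    findings = {}
    for column, values in table.items():
        sample = [v for v in values if v]  # ignore empty values
        if not sample:
            continue
        for name, pattern in CLASSIFIERS.items():
            hits = sum(1 for v in sample if pattern.match(v))
            if hits / len(sample) >= THRESHOLD:
                findings[column] = name
                break
    return findings


# Made-up sample values for three columns.
table = {
    "contact": ["a@example.com", "b@example.org", "c@example.net"],
    "tax_id": ["123-45-6789", "987-65-4321", ""],
    "city": ["Austin", "Denver", "Boston"],
}

# Each finding becomes a candidate entry for the privacy risk registry.
risk_register = [
    {"column": col, "classifier": kind} for col, kind in scan(table).items()
]
print(risk_register)
```

Findings from a scan like this are candidates, not verdicts; the review step described above is where a privacy professional confirms or dismisses each one.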

Data profiling is also part of the data privacy set of activities. Running a data profile surfaces patterns in the data, which helps identify what can be ignored and what requires additional research and action.

Finally, lineage helps identify where data came from and where it goes. Data privacy professionals find it useful for tracing a problem to its source, taking action, and clearing items from the privacy risk registry.

Summary

Data privacy tends to change and expand as legislation changes across jurisdictions. It requires sustained attention to understand what data exists, what data is of privacy concern, how data is used, and what data must be changed in response to privacy action requests. Bigeye has a set of tools that can reduce the workload of data privacy professionals and reinforce the focus areas around data privacy. It is important that data stewards, data security analysts, and data privacy professionals work together to monitor and improve the data privacy posture to prevent fines and other negative events.

Appendix:

Privacy classification structure

Privacy classification is the process of categorizing data or information based on its sensitivity and the level of protection it requires. It's a core part of data governance and of compliance with regulations and standards such as GDPR, HIPAA, and ISO 27001. A typical structure for privacy classification:

- Public — Information that can be freely disclosed without harm. Examples: published press releases, public website content, marketing brochures. Protection level: minimal or none. Access: available to anyone.

- Internal / Proprietary — Non-sensitive business information meant only for internal use. Examples: internal process documentation, standard operating procedures, internal memos. Protection level: low. Access: employees and trusted contractors.

- Confidential — Sensitive business or personal information that could cause harm if disclosed. Examples: customer lists, internal financial reports, contracts, non-public product roadmaps. Protection level: medium — requires secure storage and controlled access. Access: limited to authorized individuals with a business need.

- Restricted / Highly Confidential — Information of the highest sensitivity; unauthorized access could cause severe harm, regulatory penalties, or reputational damage. Examples: PII, health records, trade secrets, encryption keys. Protection level: high — encryption in transit and at rest, strict access controls, monitoring. Access: only essential personnel; requires explicit approval.

- Special Category (GDPR context) — Personal data that's especially sensitive under GDPR Article 9. Examples: racial or ethnic origin, political opinions, religious beliefs, biometric data, sexual orientation, health data. Protection level: very high — must meet additional legal requirements for collection, processing, and storage. Access: restricted to specific roles with lawful basis and documented consent.

Privacy characteristics reference

Privacy characteristics are the key qualities or attributes that determine how well personal or sensitive information is protected, managed, and used:

- Confidentiality — Ensuring that personal data is only accessible to authorized individuals or systems. Protects against unauthorized disclosure.

- Integrity — Safeguarding the accuracy and completeness of personal data. Prevents unauthorized alterations or tampering.

- Availability — Making sure personal information is accessible when legitimately needed. Balances protection with usability.

- Purpose limitation — Collecting and using data only for specific, explicit, and legitimate purposes. Prohibits secondary uses without consent.

- Data minimization — Gathering only the data necessary for the intended purpose. Reduces risk of exposure.

- Transparency — Clearly informing individuals how their data is collected, used, shared, and stored. Includes privacy notices and clear policies.

- Consent and control — Allowing individuals to make informed choices about their data. Includes the right to opt-in, opt-out, and withdraw consent.

- Accountability — Organizations must take responsibility for complying with privacy laws and best practices. Demonstrated through governance, documentation, and audits.

- Security — Implementing technical and organizational measures to protect personal data from breaches. Includes encryption, access controls, and monitoring.

- Individual rights enablement — Supporting rights such as access, correction, deletion, and portability. Complies with frameworks like GDPR, CCPA, etc.

Full list of GDPR articles

By reviewing GDPR and its many articles, the breadth and depth of data privacy can be better understood. The articles that privacy professionals spend the most time addressing are: 30, 16, 17, 18, 20, 21, 15, 19, and 22. The full list for reference:

General Provisions

Article 1 – Subject matter and objectives; Article 2 – Material scope; Article 3 – Territorial scope; Article 4 – Definitions

Principles

Article 5 – Principles relating to processing of personal data; Article 6 – Lawfulness of processing; Article 7 – Conditions for consent; Article 8 – Conditions applicable to child's consent in relation to information society services; Article 9 – Processing of special categories of personal data; Article 10 – Processing of personal data relating to criminal convictions and offenses; Article 11 – Processing which does not require identification

Rights of the data subject

Article 12 – Transparent information, communication, and modalities for the exercise of rights; Article 13 – Information to be provided where personal data are collected from the data subject; Article 14 – Information to be provided where personal data has not been obtained from the data subject; Article 15 – Right of access by the data subject; Article 16 – Right to rectification; Article 17 – Right to erasure ("Right to be Forgotten"); Article 18 – Right to restriction of processing; Article 19 – Notification obligation regarding rectification or erasure of personal data or restriction of processing; Article 20 – Right to data portability; Article 21 – Right to object; Article 22 – Automated individual decision-making, including profiling; Article 23 – Restrictions

Controller and processor

Article 24 – Responsibility of the controller; Article 25 – Data protection by design and by default; Article 26 – Joint controllers; Article 27 – Representatives of controllers or processors not established in the Union; Article 28 – Processor; Article 29 – Processing under the authority of the controller or processor; Article 30 – Records of processing activities; Article 31 – Cooperation with the supervisory authority; Article 32 – Security of processing; Article 33 – Notification of a personal data breach to the supervisory authority; Article 34 – Communication of a personal data breach to the data subject; Article 35 – Data protection impact assessment; Article 36 – Prior consultation; Article 37 – Designation of the data protection officer; Article 38 – Position of the data protection officer; Article 39 – Tasks of the data protection officer; Article 40 – Codes of conduct; Article 41 – Monitoring of approved codes of conduct; Article 42 – Certification; Article 43 – Certification bodies

Transfer of data

Article 44 – General principle for transfers; Article 45 – Transfers on the basis of an adequacy decision; Article 46 – Transfers subject to appropriate safeguards; Article 47 – Binding corporate rules; Article 48 – Transfers or disclosures not authorized by Union law; Article 49 – Derogations for specific situations; Article 50 – International cooperation for the protection of personal data

Independent supervisory authorities

Article 51 – Supervisory authority; Article 52 – Independence; Article 53 – General conditions for the members of the supervisory authority; Article 54 – Rules on the establishment of the supervisory authority; Article 55 – Competence; Article 56 – Competence of the lead supervisory authority; Article 57 – Tasks; Article 58 – Powers; Article 59 – Activity reports

Cooperation and consistency

Article 60 – Cooperation between the lead supervisory authority and other authorities concerned; Article 61 – Mutual assistance; Article 62 – Joint operations of supervisory authorities; Article 63 – Consistency mechanism; Article 64 – Opinion of the board; Article 65 – Dispute resolution by the board; Article 66 – Urgency procedure; Article 67 – Exchange of information; Article 68 – European data protection board; Article 69 – Independence; Article 70 – Tasks of the board; Article 71 – Reports; Article 72 – Procedure; Article 73 – Chair; Article 74 – Tasks of the chair; Article 75 – Secretariat; Article 76 – Confidentiality

Remedies, liability, and penalties

Article 77 – Right to lodge a complaint with a supervisory authority; Article 78 – Right to an effective judicial remedy against a supervisory authority; Article 79 – Right to an effective judicial remedy against a controller or processor; Article 80 – Representation of data subjects; Article 81 – Suspension of proceedings; Article 82 – Right to compensation and liability; Article 83 – General conditions for imposing administrative fines; Article 84 – Penalties

Provisions relating to specific processing situations

Article 85 – Processing and freedom of expression and information; Article 86 – Processing and public access to official documents; Article 87 – Processing of the national identification number; Article 88 – Processing in the context of employment; Article 89 – Safeguards and derogations relating to processing for archiving purposes in the public interest, scientific or historical research, or statistical purposes; Article 90 – Obligations of secrecy; Article 91 – Existing data protection rules of churches and religious associations

Delegated acts and implementing acts

Article 92 – Exercise of the delegation; Article 93 – Committee procedure

Final provisions

Article 94 – Repeal of Directive 95/46/EC; Article 95 – Relationship with Directive 2002/58/EC (ePrivacy Directive); Article 96 – Relationship with previously concluded agreements; Article 97 – Commission reports; Article 98 – Review of other Union legal acts on data protection; Article 99 – Entry into force and application.

References

- IBM: What is data privacy

- Cloudflare: What is data privacy

- Dataversity: What is data privacy

- CrowdStrike: Data privacy

- TechTarget: Data privacy / information privacy

- Northeastern University: What is data privacy

- Ohio University: Defining sensitive data

- Spirion: How to determine the sensitivity of information

- IT Governance: Personal data vs. sensitive data under GDPR

- NIST: Sensitive information glossary

- SailPoint: Sensitive data

- University of Virginia: Definitions of sensitive data

- European Commission: What personal data is considered sensitive

- Carnegie Mellon University: Data classification guidelines

- University of Illinois: Data classification

- Michigan Tech: Data classification and protection standards

- Spirion: Data classification

- NIST IR 8496: Data classification

- University System of Maryland: Data privacy foundations

- University of Chicago: Data classification standard


What's the difference between data privacy and data security?

Data security is about preventing unauthorized access to data. Data privacy is about ensuring that authorized access and use is lawful, appropriate, and consistent with individuals' rights. A database can be perfectly secure and still contain serious privacy violations if it retains data beyond its permitted purpose or uses it in ways the individual didn't consent to. Both disciplines are necessary, and they require different tools, different policies, and different expertise.

What does GDPR compliance actually require from the data team versus legal?

Legal owns the interpretation of obligations and the response to regulators. The data team owns the operational infrastructure that makes compliance possible: maintaining the ROPA, executing deletion and access requests accurately and within time limits, implementing data classification, controlling access to sensitive data, and providing the reporting that legal and the CPO need to demonstrate compliance. Neither team can do their job well without the other. The clearest failures in data privacy compliance happen when these teams operate independently.

How does data lineage help with privacy compliance?

When a deletion or access request comes in, the immediate challenge isn't technical execution — it's knowing the full scope of where that data exists. A customer record that entered your CRM three years ago may now exist in a data warehouse, a reporting table, an ML training dataset, and a downstream dashboard. Lineage maps all of those dependencies before you start making changes, so you can respond accurately and completely rather than deleting one instance and missing five others. It also helps you demonstrate to regulators that your response was thorough.

How does data sensitivity relate to AI governance?

Data sensitivity asks whether a given dataset is appropriate to use in an AI system. The answer depends on how the data is classified, what consent was obtained when it was collected, and whether the intended AI use case falls within that consent. This is essentially privacy classification applied to a new surface area. Organizations with mature data classification programs have a significant head start on AI governance, because the foundational work is the same: know what sensitive data you have, know where it flows, and control what systems can access it.
