The House of Data Series: Compliance

This paper focuses on how data programs establish and maintain compliance through policy, standards, audits, and continuous improvement frameworks. It does not cover security controls, privacy program design, or pipeline operations in depth — those are addressed in the Data Security, Privacy, and DataOps whitepapers.

House of Data Series

Every strong data program is built like a house. Data Architecture forms the foundation — the platforms, pipelines, and operating model that everything else depends on. Seven domain pillars rise from that foundation: Data Quality, Privacy, Data Security, DataOps, Compliance, Data Enablement, and Data Consumption. Data Literacy connects them all as a beam, ensuring the organization can read and act on data. At the top, People & Leadership provides the direction and accountability that holds everything together.

This series of whitepapers covers each component in depth. Each paper was written by Jim Barker (Principal, Data Strategy), with support from a practitioner with direct experience in that domain.

This paper covers Compliance & Oversight — the pillar responsible for the policies, standards, and verification mechanisms that keep data programs operating correctly. Compliance isn't about catching people doing things wrong. It's about building systems where doing things right is the path of least resistance.

Compliance & oversight

Great data programs have a defined way of operating. They establish norms and mores, and they provide oversight to ensure processes are followed correctly.

Trust but Verify, Ronald Reagan

Trust but verify is a mantra for compliance around data, based on the Russian saying "doveryai, no proveryai." It sets a standard of giving a wide range of people in your organization the ability to do their jobs, then verifying that things are being done correctly, rather than putting aggressive controls in place.

This white paper covers material involving standards, policies, how-to's, and oversight.

Policies, standards & oversight

Firms need a wide range of data capabilities. These include:

- Data Security: granting and revoking access, reviewing appropriate use of data, continued authorization, and addressing audit needs

- Data Privacy: managing what data can be used for what purposes, acting on privacy requests, and helping the firm avoid fines and penalties for failing to follow local and international privacy regulations

- Data Quality: performing a wide range of actions to provide data and assets that can be trusted and fit the business need

- DataOps: building and running core data sets to acquire, transform, and make data capabilities available in a predictable fashion

- Data Enablement: supporting the data community, rolling out new data capabilities, and providing a conduit for addressing all data capabilities

- Data Consumption: providing solutions and capabilities to the business community to answer business questions, predict the future, and run processes within normal parameters

For the business to run well, there is a set of rules, norms, and mores that needs to be followed. The policy pyramid describes these as levels of action:

Policies: the foundational rules on which all operations are built. These are the basic DNA of the organization.

Standards: the operational guidelines for how things are done. While policies define the structured rules, standards set organizations up for success.

Standard Operating Procedures (SOPs) or Processes: the how-to's or operational guidelines that establish how everything is done. Standards produce guidelines, and SOPs give instructional guidance on how the business works.

Oversight: the set of mechanisms put in place to monitor and verify that things are being done as expected and, when they are not, to provide the support that helps bring work back in line with standard procedures.

Trust but Verify: an interactive way to look at Policies, Standards, and SOPs and operate in the firm's best interest. Implement it so that oversight is a verification step; when processes aren't followed correctly, the oversight function simply identifies training opportunities and helps to improve behavior.

Audit: the formal mechanics (both internal and external) used to verify that the company is operating within its guidelines. Not all policies, standards, and SOPs are going to be audited, but some areas get special attention.

How-To's: a detailed set of documents used to help people do their jobs. Unlike policies, standards, and SOPs, which are formal documents, how-to's describe in business terms how to complete a task. These documents should not be cryptic or filled with legal terminology; they should use common business language and make it possible for anyone to complete a task.

The goal of Policies & Oversight is to be helpful, not hurtful. Data Governance and the role of policies often get a bad reputation because staff feel that this is a "gotcha" approach. Trying to catch people violating operating norms in order to enforce 100% compliance is the wrong way to operate. It is hurtful. The goal of this area is to be helpful, to build muscle memory on how things are to be done, and to get everyone working together.

Good governance teams have a focus that is helpful. They are established to give staff the tools they need to be successful and provide the business with a set of tools to make their jobs easier. This idea is expanded in the Data Enablement whitepaper.

Hurtful story

A data security lead, tired of security threats and following a ransomware attack on a competitor, hatches this plan and sends out the following note:

"You as a valued employee of Minnco have been granted a reward for great service over the last year. Go to this LINK and enter your information to choose a gift for your service. Thank you for all you do."

Employees who click on the link get a warning message from InfoSec, are signed up for a mandatory data security refresher course, and have their names presented to senior leadership.

While this seems like a great idea, it's not. It makes people feel bad, makes InfoSec work seem toxic, and drives down morale. The goal was to be helpful and protect the firm, but in reality the exercise was hurtful and reduced people's willingness to point out issues when they arise.

Helpful

The teams that are supporting data — such as Data Governance, Data Stewardship, Data Privacy, Data Security, and others — should make widely available a set of How-To's and policy documents that are easy to find. These can include a library of How-To's, a business glossary, a "Help Me" inbox, and an internal AI prompt capability, to help provide the right information and efficiently get answers from subject-matter experts.

Spending time, talent, and resources to provide helpful tools is a very different experience from running "gotcha" activities.

Oversight and helpful policy considerations

When rolling out policies, the best ones are those that people follow without needing to know about them. The idea is to use tools and techniques that let users do their jobs and follow the policy without having to think about it.

As you execute through key areas of data, think about how you can provide the necessary interfaces that allow policies to be followed with little to no effort or awareness.

Oversight takes two forms:

- Manual — a set of manual checks that the data governance or other supporting teams execute to verify that policies are being followed. While manual efforts are not preferred, sometimes you need to make the effort to "check."

- Automated — whenever possible, use automated checks, reports, or AI to evaluate that a process is being followed. When a process isn't being followed, be careful about using automated responses, as sensitivity to people's emotions is important. As process gaps frequently occur, work with your councils to brainstorm and build out solutions to address these gaps.
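As a rough illustration of an automated check, the sketch below flags access grants whose last review is overdue. The `AccessGrant` record, the `overdue_reviews` helper, the sample data, and the 90-day threshold are all hypothetical choices made for this example, not part of any specific tool or policy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AccessGrant:
    # Hypothetical record of one user's access to one data set.
    user: str
    dataset: str
    last_reviewed: date


def overdue_reviews(grants, today, max_age_days=90):
    """Return grants whose last access review is older than max_age_days."""
    return [g for g in grants if (today - g.last_reviewed).days > max_age_days]


# Illustrative sample data only.
grants = [
    AccessGrant("avery", "sales_orders", date(2024, 1, 10)),
    AccessGrant("blake", "sales_orders", date(2024, 5, 2)),
    AccessGrant("casey", "invoices", date(2023, 11, 20)),
]

# The check only builds a follow-up list for the governance team.
# It does not revoke access or message anyone automatically.
follow_ups = overdue_reviews(grants, today=date(2024, 6, 1))
for g in follow_ups:
    print(f"Review needed: {g.user} on {g.dataset} (last reviewed {g.last_reviewed})")
```

The check deliberately stops at producing a list. Deciding how to respond is left to people, in keeping with the caution above about automated responses.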

Use your community of practice meetings to share the types of policy challenges that have been encountered and what the data organization is doing to address them. When a gap is closed, celebrate that success.

Benefits of lineage

Any discussion of lineage needs the term provenance: data provenance is the ability to trace the origin of data and identify how it has been altered or transformed throughout its lifecycle.

Audit is very involved in oversight. Auditors will often ask questions like: "This report is showing x metric — how can we tell if it was using sensitive data or was calculated correctly?"

In those cases, lineage can provide the provenance of data: where it came from, how the metric was manipulated, and in some cases when it changed. This is one of the great areas where lineage can be helpful.

By looking at a lineage graph you can see where something originated or how it was calculated, which is called root-cause analysis of provenance. Many finance leaders champion the role of lineage to help answer auditor questions. Often, showing a lineage graph answers the auditors' questions so they can move on to the next topic. That is a great benefit.
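To make the auditor's question concrete, here is a minimal sketch of walking a lineage graph upstream from a report metric to find its origins and any sensitive inputs. The asset names, the `UPSTREAM` mapping, and the `SENSITIVE` tag set are invented for illustration. A real lineage tool would supply this graph through its own interface.

```python
# Hypothetical lineage: each asset maps to the assets it is derived from.
UPSTREAM = {
    "revenue_report.net_revenue": ["finance.revenue_daily"],
    "finance.revenue_daily": ["raw.sales_orders", "raw.refunds"],
    "raw.sales_orders": [],
    "raw.refunds": ["raw.customer_contacts"],
    "raw.customer_contacts": [],
}

# Hypothetical sensitivity tags maintained by the governance team.
SENSITIVE = {"raw.customer_contacts"}


def trace_provenance(asset, upstream):
    """Return every asset that feeds into `asset`, directly or indirectly."""
    seen = set()
    stack = [asset]
    while stack:
        current = stack.pop()
        for parent in upstream.get(current, []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


ancestors = trace_provenance("revenue_report.net_revenue", UPSTREAM)
# Origins are ancestors that have no upstream assets of their own.
origins = sorted(a for a in ancestors if not UPSTREAM.get(a))
sensitive_inputs = sorted(ancestors & SENSITIVE)

print("Origins:", origins)
print("Sensitive inputs:", sensitive_inputs)
```

The same traversal answers both halves of the auditor's question: the origins show where the metric came from, and the intersection with the sensitivity tags shows whether sensitive data contributed to it.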

One banking CDO once indicated that lineage changed the game of audits — they didn't know how they ever lived before lineage. Statutory requirements have required lineage for years, and this is an area that continues to expand.

Six Sigma tools in compliance — check lists

Compliance plays an oversight role, and when done correctly it also fosters a reputation for continuous process improvement. To that end, some Six Sigma tools can provide benefits. The two that most notably come to mind are: (1) check sheets; (2) control charts.

A check sheet is a fairly simple document used for collecting data in real time. Check sheets are used heavily in manufacturing. In the data world, rather than watching someone complete a task, a check sheet can be a specialized form of telemetry that captures business events relative to the creation, maintenance, and use of data. It helps compliance by showing: (1) how many of X are created; (2) how many updates there have been; (3) how many staff members have completed a task. Check sheets can also be used in running chairsides.

Chairsides: An exercise where a trainer, compliance person, or auditor watches an individual complete a task in real time. This isn't used to monitor the individual but to find challenges in the processes and tools available for executing the task.

Classification Check Sheets: check sheets that keep track of a sub-category of an event. These might track how many (1) Sales Orders, (2) Shipments, and (3) Invoices are collected as part of an analysis of the effort needed to complete such a task.

Frequency Check Sheets: check sheets that keep track of how many times an event takes place over a longer period of time. They help in understanding the size and scope of the work effort, and they can help compliance figure out whether staff are being asked to complete redundant, non-value-add tasks that could be automated for improved efficiency.

Measurement Check Sheets: check sheets that capture more precise details, or aggregates, of business events. These are often viewed by staff members, by transaction, and provide great insight into the accuracy and efficiency of the work done by staff.

Check List (Procedural): these are less about collecting data and more about helping staff complete tasks. They are often a job aid or how-to that lists the steps in completing a task. Some examples include: (1) Submitting a help-desk ticket; (2) Creating a sales order; (3) Correcting financial transactions after the books close.

In short, check sheets and check lists help you understand what is happening so you can make better decisions, simplify tasks, and increase efficiency.
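The classification and frequency check sheets described above amount to tallies over captured events. The sketch below shows one way to build both from a small event log. The log format, the week labels, and the sample events are assumptions made for illustration.

```python
from collections import Counter

# Hypothetical event log: (ISO week, event type) captured as staff work.
events = [
    ("2024-W01", "Sales Order"),
    ("2024-W01", "Shipment"),
    ("2024-W01", "Sales Order"),
    ("2024-W02", "Invoice"),
    ("2024-W02", "Sales Order"),
    ("2024-W02", "Invoice"),
]

# Classification check sheet: tally events by sub-category.
by_type = Counter(event_type for _, event_type in events)

# Frequency check sheet: tally how often events occur in each period.
by_week = Counter(week for week, _ in events)

# Print tally marks the way a paper check sheet would show them.
for event_type, count in by_type.most_common():
    print(f"{event_type:12} {'|' * count} ({count})")
for week in sorted(by_week):
    print(f"{week}  {by_week[week]} events")
```

In practice the events would come from telemetry rather than a hard-coded list, but the tallying step stays the same.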

Six Sigma tools in compliance — control charts

Control charts, pioneered by Walter Shewhart, are the second tool. They are often used to measure effectiveness and take two main forms: (1) Process Flow, documenting the flow of a business process; (2) Statistical Analysis, capturing and reporting on the reliability of the process documented in the process flow.

The main components of a control chart are:

- Control limits: the upper control limit (UCL) and lower control limit (LCL) establish natural boundaries for variation in the process. Any point outside these limits suggests an assignable cause to address for improvement.

- Data points: each point on the chart represents a data measurement from the process, such as defect counts, dimensions, etc. Tracking these points over time allows monitoring of process performance.
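The text does not say how the control limits are calculated, so the sketch below assumes one common convention: an individuals (XmR) chart, where the limits sit at the mean plus or minus 2.66 times the average moving range. The function names and the sample counts are hypothetical.

```python
from statistics import mean


def control_limits(points):
    """Return (LCL, center line, UCL) for an individuals (XmR) control chart."""
    if len(points) < 2:
        raise ValueError("need at least two data points")
    center = mean(points)
    # Moving range: absolute difference between consecutive points.
    moving_ranges = [abs(b - a) for a, b in zip(points, points[1:])]
    # 2.66 = 3 / 1.128, the standard constant for moving ranges of size 2.
    spread = 2.66 * mean(moving_ranges)
    return center - spread, center, center + spread


def out_of_control(points, lcl, ucl):
    """Return (index, value) pairs for points outside the control limits."""
    return [(i, x) for i, x in enumerate(points) if x < lcl or x > ucl]


# Hypothetical baseline: daily counts of failed data-quality checks.
baseline = [4, 5, 3, 6, 4, 5, 4, 6, 5, 4]
lcl, center, ucl = control_limits(baseline)

# New observations are compared against the baseline limits.
new_points = [5, 4, 14, 6]
flagged = out_of_control(new_points, lcl, ucl)
print(f"LCL={lcl:.2f} CL={center:.2f} UCL={ucl:.2f} flagged={flagged}")
```

A flagged point is a prompt to look for an assignable cause. It is not proof that someone did something wrong. Other chart types, such as a c-chart for counts, use different limit formulas.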

Role of policies & oversight in AI trust

In order to trust AI processes you need to trust your data. In AI trust, there are three main areas to consider:

- Data Quality — the idea that the data being used by AI has been reviewed, verified, and has good data quality. That is, the data is "fit for purpose" — if it isn't of high quality it shouldn't be used.

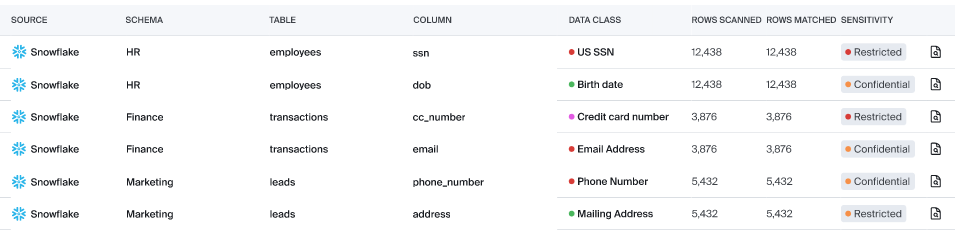

- Data Sensitivity — closely related to data privacy, but sensitivity asks the question: Should this data be shared or not? If the data falls in a restricted category that is private, confidential, restricted, or internal, it shouldn't be used by AI.

- Data Certification — the idea that data should be reviewed and certified for its AI trust level. Can, or better yet should, this data be used by AI? There may be times where data that isn't certified needs to be used, but risks must be taken into account and more human involvement is critical.

To make AI trust work, firms need to have rules that are followed to meet the goals and objectives of data quality, sensitivity, and certification.
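One way to picture such rules is as a simple gate that combines the three areas. The sketch below is illustrative only: the `Dataset` fields, the `ai_trust_decision` function, and the three outcome labels are assumptions. The restricted categories are taken from the sensitivity description above.

```python
from dataclasses import dataclass

# Sensitivity categories the text lists as off-limits for AI use.
RESTRICTED = {"private", "confidential", "restricted", "internal"}


@dataclass
class Dataset:
    # Hypothetical catalog entry; field names are illustrative.
    name: str
    quality_passed: bool
    sensitivity: str
    certified: bool


def ai_trust_decision(ds):
    """Return 'deny', 'review', or 'allow' for using a data set with AI."""
    if not ds.quality_passed:
        return "deny"      # not fit for purpose
    if ds.sensitivity in RESTRICTED:
        return "deny"      # should not be shared with AI
    if not ds.certified:
        return "review"    # usable only with added human involvement
    return "allow"


# Illustrative catalog entries only.
catalog = [
    Dataset("product_catalog", True, "public", True),
    Dataset("customer_contacts", True, "confidential", True),
    Dataset("web_logs", True, "public", False),
]
for ds in catalog:
    print(f"{ds.name}: {ai_trust_decision(ds)}")
```

The "review" outcome reflects the point that uncertified data may sometimes need to be used, but only with the risks weighed and more human involvement.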

Policies, standards, and SOPs are the rules that provide the foundation for AI trust. Oversight is the mechanism to see if AI trust exists for AI solutions and the data being used by AI. Therefore, following standards and SOPs is the foundation of AI trust.

As in other areas of data governance, policies and oversight are critical. When humans are involved, there is a high probability that someone will flag when they have access they shouldn't, or when they are accessing data that should be more widely available. Machines and algorithms don't have that ethical function; they have no street smarts, so to speak. Because of this, it is critical that effective policies are put in place and that oversight focuses on them.

To have AI trust you must keep the appropriate controls in place to protect the firm from the inappropriate use of data within AI. Having policies and enforcement of data governance is a critical function for achieving AI trust.

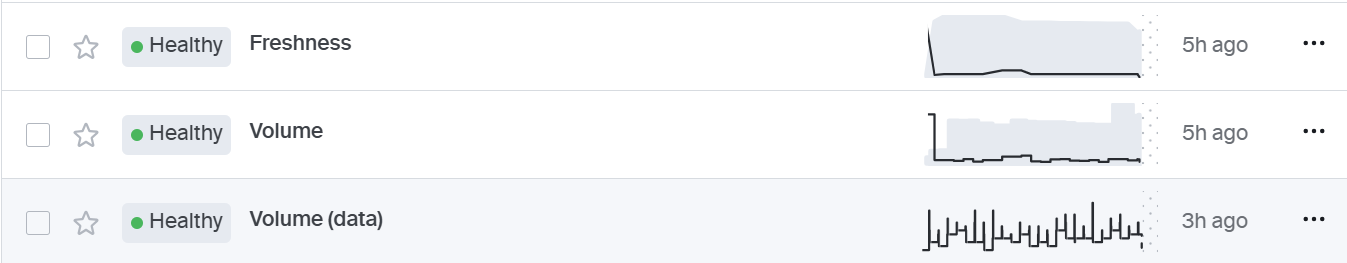

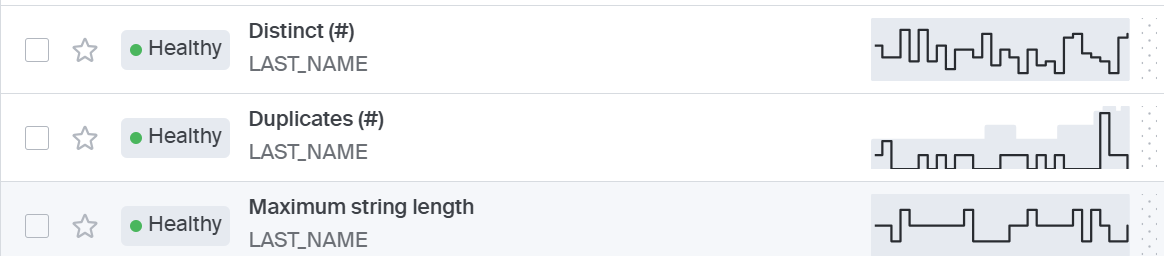

Bigeye's role in policies & oversight

Bigeye has a long history of using machine learning to address data quality. It helps to understand if data is current through Pipeline Reliability capabilities, and it determines if the data is of high quality, checking on data across Completeness, Conformity, Consistency, Uniqueness, and Timeliness. These checks help to address the first pillar of AI trust — Data Quality. Further, with the introduction of Sensitive Data Scanning (SDS), Bigeye can determine if data that is sensitive from a privacy point of view exists in the table. Additionally, Bigeye provides the ability to certify data based on its quality, sensitivity, and level of use.

Summary

Compliance is the act of oversight. It reviews operations, either in real time or at different points in time, and verifies that the business is operating with data in a proper manner. There is always an attempt to do this without being too controlling or too time-consuming, but the important part is to find out when processes aren't being followed before that becomes a problem. Most firms will be audited, such as through SOC 2, to verify processes are being followed. An established compliance function simplifies operations and audits and lowers the risk to both.
